TL;DR

- Small language models (SLMs) are optimized generative AI solutions that offer cheaper and faster alternatives to massive AI systems, like ChatGPT

- Enterprises adopt SLMs as their entry point to generative AI due to lower training costs, reduced infrastructure requirements, and quicker ROI. These models also provide enhanced data security and more reliable outputs.

- Small language models deliver targeted value across key business functions – from 24/7 customer support to offline multilingual assistance, smart ticket prioritization, secure document processing, and other similar tasks

Everyone wants AI, but few know where to start.

Enterprises face analysis paralysis when implementing AI, especially when massive, expensive models feel like overkill for routine tasks. Why deploy a $10 million solution just to answer FAQs or process documents? The truth is, most businesses don’t need boundless AI creativity; they need focused, reliable, and cost-efficient automation.

That’s where small language models (SLMs) shine. They deliver quick wins – faster deployment, tighter data control, and measurable return on investment (ROI) – without the complexity or risk of oversized AI.

Let’s uncover what SLMs are, how they can help your business, and how to proceed with implementation.

What are small language models?

So, what does SLM mean?

Small language models are optimized generative AI (Gen AI) tools that deliver fast, cost-efficient results for specific business tasks, such as customer service or document processing, without the complexity of massive systems like ChatGPT. SLMs run affordably on your existing infrastructure, allowing you to maintain security and control and offering focused performance where you need it most.

How SLMs work, and what makes them small

Small language models are designed to deliver high-performance results with minimal resources. Their compact size comes from these strategic optimizations:

- Focused training data. Small language models train on curated datasets from your business domain, such as industry-specific content and internal documents, rather than the entire internet. This targeted approach eliminates irrelevant noise and sharpens performance for your exact use cases.

- Optimized architecture. Each SLM is engineered for a specific business function. Every model has just enough layers and connections to excel at its designated tasks, which can let it outperform bulkier alternatives on those tasks.

- Knowledge distillation. Small language models capture the essence of larger AI systems through a “teacher-student” learning process. They take only the most impactful patterns from expansive LLMs, preserving what matters for their designated tasks.

- Dedicated training techniques. There are two main training techniques that help SLMs maintain focus:

  - Pruning removes unnecessary parts of the model. It systematically trims underutilized connections from the AI’s neural network. Much like pruning a fruit tree to boost yield, this process strengthens the model’s core capabilities while removing wasteful components.

  - Quantization simplifies calculations, making the model run faster without demanding expensive, powerful hardware. It converts the model’s mathematical operations so that they use whole numbers (low-precision integers) instead of fractions (floating-point values).
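The two techniques above can be illustrated with a toy example. This is a minimal sketch on a plain list of numbers, not how any particular framework implements them: the function names, the sample weights, the 50% pruning fraction, and the single shared int8 scale are all assumptions made for illustration.

```python
def prune_by_magnitude(weights, fraction):
    """Zero out the given fraction of weights with the smallest absolute value."""
    k = int(len(weights) * fraction)
    if k == 0:
        return list(weights)
    # Indices of the k weights closest to zero (the "underutilized" connections)
    smallest = sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k]
    drop = set(smallest)
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Map floats to whole numbers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integer representation."""
    return [v * scale for v in quantized]


# Hypothetical weights of a single tiny layer
weights = [0.82, -0.03, 0.41, 0.002, -0.67, 0.05]

pruned = prune_by_magnitude(weights, fraction=0.5)
quantized, scale = quantize_int8(pruned)
restored = dequantize(quantized, scale)

print("pruned:   ", pruned)
print("quantized:", quantized)
print("restored: ", [round(v, 3) for v in restored])
```

The restored values differ from the pruned ones by at most half a quantization step, which is the accuracy traded for smaller storage and cheaper integer arithmetic.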

Small language model examples

Established tech giants like Microsoft, Google, IBM, and Meta have built their own small language models. One SLM example is DistilBERT. This model is based on Google’s BERT foundation model. DistilBERT is 40% smaller and 60% faster than its parent model while keeping 97% of BERT’s language understanding capabilities.

Other small language model examples include:

- Gemma is Google’s family of lightweight open models built on the same research and technology as Gemini

- GPT-4o mini is a smaller, more cost-efficient version of GPT-4o

- Phi is an SLM suite from Microsoft that contains several small language models

- Granite is IBM’s large language model series that also contains domain-specific SLMs

SLM vs. LLM

You probably hear about large language models (LLMs) more often than small language models. So, how are they different? And when should you use each one?

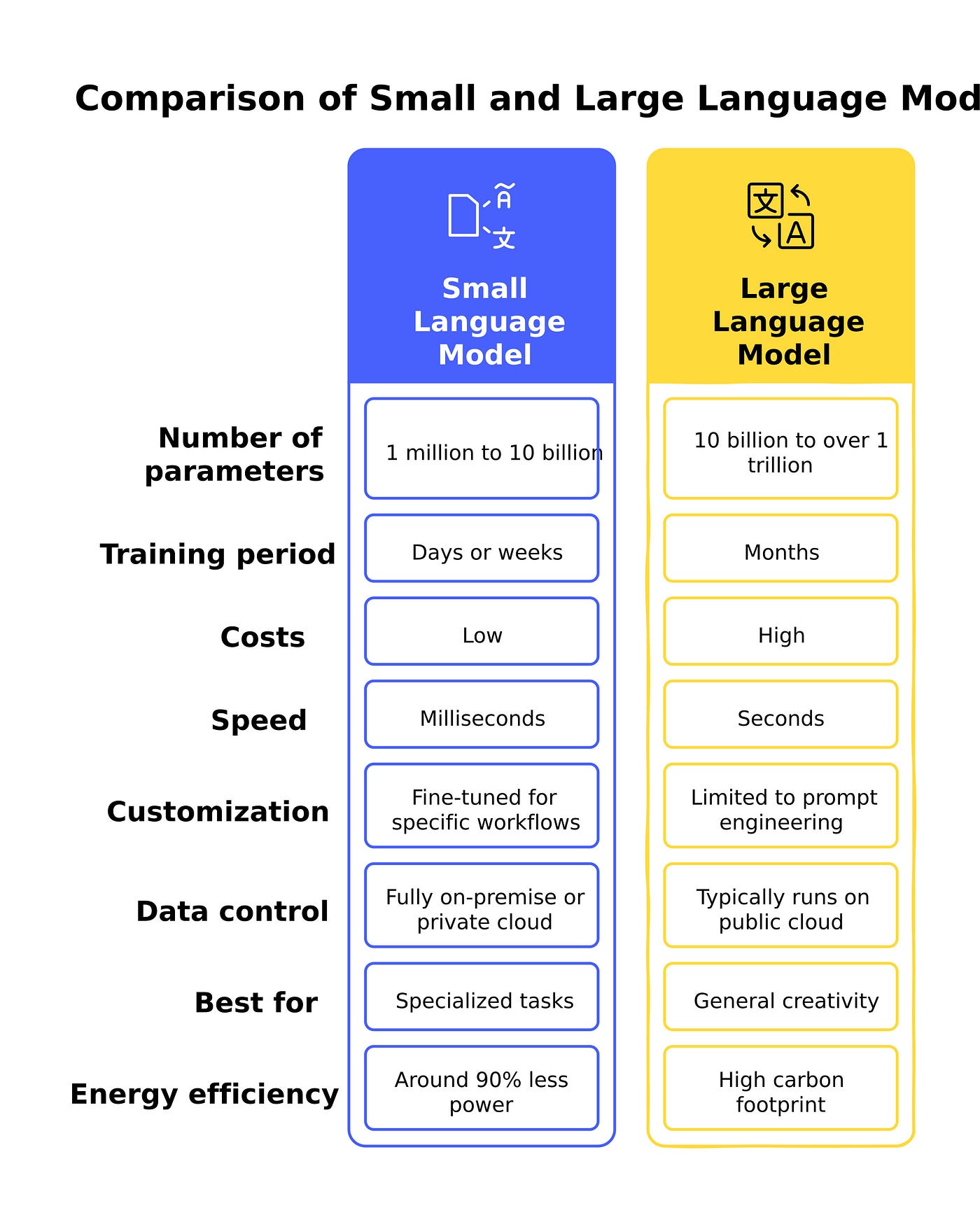

As presented in the table below, LLMs are much larger and pricier than SLMs. They’re costly to train and use, and their carbon footprint is very high. A single ChatGPT query consumes as much energy as ten Google searches.

Additionally, LLMs have a history of exposing companies to embarrassing data leaks. For instance, Samsung prohibited employees from using ChatGPT after the company’s internal source code was exposed through it.

An LLM is a Swiss army knife – versatile but bulky, while an SLM is a scalpel – smaller, sharper, and perfect for precise jobs.

The table below presents an SLM vs. LLM comparison.

Is one language model better than the other? The answer is no. It all depends on your business needs. SLMs allow you to score quick wins. They’re faster, cheaper to deploy and maintain, and easier to control. Large language models, on the other hand, enable you to scale your business when your use cases justify it. But if companies use LLMs for every task that requires Gen AI, they’re running a supercomputer where a workstation will do.

Why use small language models in business?

Many forward-thinking companies are adopting small language models as their first step into generative AI. These models align well with business needs for efficiency, security, and measurable results. Decision-makers choose SLMs because of:

- Lower entry barriers. Small language models require minimal infrastructure as they can run on existing company hardware. This eliminates the need for costly GPU clusters or cloud fees.

- Faster ROI. With deployment timelines measured in weeks rather than months, SLMs deliver tangible value quickly. Their lean architecture also allows rapid iterations based on real-world feedback.

- Data privacy and compliance. Unlike cloud-based LLMs, small language models can operate entirely on-premises or in private cloud environments, keeping sensitive data within corporate control and simplifying regulatory compliance.

- Task-specific optimization. Trained for focused use cases, SLMs can outperform general-purpose models in accuracy on those tasks, as they don’t contain irrelevant capabilities that could compromise performance.

- Future-proofing. Starting with small language models builds internal AI expertise without overcommitment. As needs grow, these models can be augmented or integrated with larger systems.

- Reduced hallucination. Their narrow training scope makes small language models less prone to generating false information.

When should you use small language models?

SLMs offer numerous tangible benefits, and many companies prefer to use them as their gateway to generative AI. But there are scenarios where small language models are not a good fit. For instance, a task that requires creativity and multidisciplinary knowledge will benefit more from LLMs, especially if the budget allows it.

If you still have doubts about whether an SLM is suitable for the task at hand, consider the image below.

Key small language model use cases

SLMs are the ideal solution when businesses need cost-effective AI for specialized tasks where precision, data control, and rapid deployment matter most. Here are five use cases where small language models are a great fit:

- Customer support automation. SLMs handle frequently asked questions, ticket routing, and routine customer interactions across email, chat, and voice channels. They reduce the workload on human staff while responding to customers 24/7.

- Internal knowledge base assistance. Trained on company documentation, policies, and procedures, small language models serve as on-demand assistants for employees. They provide quick access to accurate information for HR, IT, and other departments.

- Support ticket classification and prioritization. Unlike generic models, small language models fine-tuned on historical support tickets can categorize and prioritize issues more effectively. They ensure that critical tickets are processed promptly, reducing response times and increasing user satisfaction.

- Multilingual support in resource-constrained environments. SLMs enable basic translation in settings where cloud-based AI may be impractical, such as offline or low-connectivity manufacturing sites and remote offices.

- Regulatory document processing. Industries with strict compliance requirements use small language models to review contracts, extract key clauses, and generate reports. Their ability to operate on-premises makes them ideal for handling sensitive legal and financial documentation.
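To make the ticket classification and prioritization use case more concrete, here is a minimal sketch of a priority queue fed by a classifier. Everything in it is hypothetical: the category names, the priority order, and the keyword-based `classify` function, which merely stands in for a fine-tuned SLM so that the example runs without a model.

```python
import heapq
from itertools import count

# Hypothetical categories; a lower number means higher priority
PRIORITY = {"outage": 0, "billing": 1, "how_to": 2}


def classify(ticket_text):
    """Placeholder for a fine-tuned SLM that returns a category label.

    Keyword rules are used here only so the sketch is runnable.
    """
    text = ticket_text.lower()
    if "down" in text or "outage" in text:
        return "outage"
    if "invoice" in text or "charge" in text:
        return "billing"
    return "how_to"


class TicketQueue:
    """Serves the most critical tickets first, in arrival order within a category."""

    def __init__(self):
        self._heap = []
        self._arrival = count()  # tie-breaker that preserves arrival order

    def add(self, ticket_text):
        category = classify(ticket_text)
        entry = (PRIORITY[category], next(self._arrival), category, ticket_text)
        heapq.heappush(self._heap, entry)

    def pop(self):
        _, _, category, ticket_text = heapq.heappop(self._heap)
        return category, ticket_text


queue = TicketQueue()
queue.add("How do I export a report?")
queue.add("I was charged twice on my invoice")
queue.add("The portal is down for our whole team")

first = queue.pop()
second = queue.pop()
print(first)
print(second)
```

In a real deployment, only `classify` would change: it would call the model, while the queueing logic around it stays the same.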

Real-life examples of companies using SLMs

Forward-thinking enterprises across different sectors are already experimenting with small language models and seeing results. Take a look at these examples for inspiration:

Rockwell Automation

This US-based industrial automation leader deployed Microsoft’s Phi-3 small language model to empower machine operators with instant access to manufacturing expertise. By querying the model in natural language, technicians quickly troubleshoot equipment and access procedural knowledge – all without leaving their workstations.

Cerence Inc.

Cerence Inc., a software development company specializing in AI-assisted interaction technologies for the automotive sector, has recently launched CaLLM Edge. This is a small language model embedded into Cerence’s automotive software that drivers can access without cloud connectivity. It can react to a driver’s commands, search for places, and assist in navigation.

Bayer

This life science giant built its small language model for agriculture – E.L.Y. This SLM can answer difficult agronomic questions and help farmers make decisions in real time. Many agricultural professionals already use E.L.Y. in their daily tasks with tangible productivity gains. They report saving up to four hours per week and achieving a 40% improvement in decision accuracy.

Epic Systems

Epic Systems, a major healthcare software provider, reports adopting Phi-3 in its patient support system. This SLM operates on premises, keeping sensitive health information safe and complying with HIPAA.

How to adopt small language models: a step-by-step guide for enterprises

To reiterate, for enterprises looking to harness AI without excessive complexity or cost, SLMs provide a practical, results-driven pathway. This section offers a strategic framework for successful SLM adoption – from initial assessment to organization-wide scaling.

Step 1: Align AI strategy with business value

Before diving into implementation, align your AI strategy with clear business objectives.

- Identify high-impact use cases. Focus on repetitive, rules-based tasks where specialized AI excels, such as customer support ticket routing, contract clause extraction, and HR policy queries. Avoid over-engineering; start with processes that have measurable inefficiencies.

- Evaluate data readiness. SLMs require clean, structured datasets. Assess the quality and accessibility of your data, such as support tickets and other internal content. If your knowledge base is fragmented, prioritize cleanup before model training. If your company lacks internal expertise, consider hiring an external data consultant to help you craft an effective data strategy. This initiative will pay off in the future.

- Secure stakeholder buy-in. Engage department heads early to identify pain points and define success metrics.

Step 2: Pilot strategically

A focused pilot minimizes risk while demonstrating early ROI.

- Launch in a controlled environment. Deploy a small language model for a single workflow, like automating employee onboarding questions, where errors are low-stakes but savings are tangible. Use pre-trained models, such as Microsoft Phi-3 and Mistral 7B, to accelerate deployment.

- Prioritize compliance and security. Opt for private SLM deployments to keep sensitive data in-house. Involve legal and compliance teams from the start to address GDPR, HIPAA, and AI transparency.

- Set clear KPIs. Track measurable metrics like query resolution speed, cost per interaction, and user satisfaction.
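As an illustration of the three KPIs named above, the sketch below computes them from a small interaction log. The `Interaction` fields, the sample values, and the KPI names are assumptions for this example; a real pilot would read them from whatever logging the deployment already produces.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Interaction:
    """One logged pilot interaction (hypothetical schema)."""
    resolution_seconds: float
    cost_usd: float
    satisfied: bool  # e.g. a thumbs-up from the user


def pilot_kpis(interactions):
    """Aggregate query resolution speed, cost per interaction, and satisfaction."""
    total = len(interactions)
    return {
        "avg_resolution_seconds": mean(i.resolution_seconds for i in interactions),
        "cost_per_interaction_usd": sum(i.cost_usd for i in interactions) / total,
        "satisfaction_rate": sum(i.satisfied for i in interactions) / total,
    }


# Made-up sample data for demonstration only
log = [
    Interaction(30.0, 0.02, True),
    Interaction(90.0, 0.04, True),
    Interaction(60.0, 0.03, False),
    Interaction(60.0, 0.03, True),
]

kpis = pilot_kpis(log)
for name, value in kpis.items():
    print(f"{name}: {value:.3f}")
```

Tracking the same numbers for the pre-pilot process gives the baseline against which early ROI can be judged.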

You can also begin with AI proof-of-concept (PoC) development. It allows you to validate your hypothesis on an even smaller scale. You can find more information in our guide on how AI PoC can help you succeed.

Step 3: Scale strategically

With pilot success proven, expand small language model adoption systematically.

- Expand to adjacent functions. Once validated in one domain, apply the model to other related tasks.

- Build hybrid AI systems. Create an AI system where different AI-powered tools work together. If your small language model can accomplish 80% of the tasks, reroute the remaining traffic to cloud-based LLMs or other AI tools.

- Support your employees. Train teams to fine-tune prompts, update datasets, and monitor outputs. Empowering non-technical staff with no-code tools or dashboards accelerates adoption across departments.
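The hybrid setup described above can be sketched as a simple router: the SLM answers first, and only low-confidence queries are escalated to an LLM. This is one possible design, not a prescribed one. The `Answer` type, the confidence score, the 0.8 threshold, and the two stand-in model functions are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Answer:
    text: str
    confidence: float  # 0.0 to 1.0, assumed to be reported by the SLM wrapper


def route(query, slm, llm, threshold=0.8):
    """Return (handler, answer_text); escalate to the LLM below the threshold."""
    answer = slm(query)
    if answer.confidence >= threshold:
        return "slm", answer.text
    return "llm", llm(query)


# Stand-ins so the sketch runs without any model; both are hypothetical.
def fake_slm(query):
    known = {"reset password": "Use the self-service portal."}
    if query in known:
        return Answer(known[query], confidence=0.95)
    return Answer("", confidence=0.2)


def fake_llm(query):
    return f"[LLM answer to: {query}]"


routine = route("reset password", fake_slm, fake_llm)
unusual = route("compare our refund policy with EU law", fake_slm, fake_llm)
print(routine)
print(unusual)
```

Logging which handler served each query also shows how much traffic the SLM actually covers, which helps when deciding where to expand next.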

Step 4: Optimize for lasting impact

Treat your SLM deployment as a living system, not a one-off project.

- Monitor performance rigorously. Track error rates, user feedback, and cost efficiency. Use A/B testing to compare small language model outputs to human or LLM benchmarks.

- Retrain with new datasets. Update models regularly with new documents, terminology, support tickets, or any other relevant data. This will prevent “concept drift” by aligning the SLM with evolving business language.

- Elicit feedback. Encourage employees and customers to flag inaccuracies or suggest model improvements.
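For the A/B comparison mentioned above, one common way to check whether a difference in error rates is more than noise is a two-proportion z-test. The sketch below is a minimal version under stated assumptions: the error counts are invented, and the 1.96 cutoff corresponds to a two-sided 5% significance level.

```python
from math import sqrt


def two_proportion_z(errors_a, n_a, errors_b, n_b):
    """z-statistic for the difference between two error rates."""
    rate_a, rate_b = errors_a / n_a, errors_b / n_b
    pooled = (errors_a + errors_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 0.0  # both groups have identical all-or-nothing error rates
    return (rate_a - rate_b) / std_err


# Invented numbers: SLM made 30 errors in 500 answers, LLM benchmark 20 in 500
z = two_proportion_z(errors_a=30, n_a=500, errors_b=20, n_b=500)
is_significant = abs(z) > 1.96  # two-sided test at the 5% level

print(f"z = {z:.3f}, significant: {is_significant}")
```

With these sample numbers the gap between 6% and 4% is not statistically significant, so more traffic would be needed before concluding that either model is better.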

Conclusion: smart AI starts small

Small language models represent the most pragmatic entry point for enterprises exploring generative AI. As this article shows, SLMs deliver targeted, cost-effective, and secure AI capabilities without the overhead of massive language models.

For the adoption process to go smoothly, it’s essential to team up with a reliable generative AI development partner.

What makes ITRex your ideal AI partner?

ITRex is an AI-native company that uses the technology to speed up production and delivery cycles. We pride ourselves on using AI to enhance our team’s efficiency while maintaining client confidentiality.

We differentiate ourselves via:

- Offering a team of professionals with diverse expertise. We have Gen AI consultants, software engineers, data governance experts, and an innovative R&D team.

- Building transparent, auditable AI models. With ITRex, you won’t get stuck with a “black box” solution. We develop explainable AI models that justify their output and comply with regulatory standards like GDPR and HIPAA.

- Delivering future-ready architecture. We design small language models that seamlessly integrate with LLMs or multimodal AI when your needs evolve – no rip-and-replace required.

Schedule your discovery call today, and we’ll identify your highest-impact opportunities. Next, you’ll receive a tailored proposal with clear timelines, milestones, and cost breakdown, enabling us to launch your AI project immediately upon approval.

FAQs

- How do small language models differ from large language models?

Small language models are optimized for specific tasks, while large language models handle broad, creative tasks. SLMs don’t need specialized infrastructure as they run efficiently on existing hardware, while LLMs need a cloud connection or GPU clusters. SLMs offer stronger data control through on-premises deployment, unlike cloud-dependent LLMs. Their focused training reduces hallucinations, making SLMs more reliable for structured workflows like document processing.

- What are the main use cases for small language models?

SLMs excel in repetitive tasks, including but not limited to customer support automation, such as FAQ handling and ticket routing; internal knowledge assistance, like HR and IT queries; and regulatory document review. They’re ideal for multilingual support in offline environments (e.g., manufacturing sites). Industries like healthcare use small language models for HIPAA-compliant patient data processing.

- Is it possible to use both small and large language models together?

Yes, hybrid AI systems combine SLMs for routine tasks with LLMs for complex exceptions. For example, small language models can handle standard customer queries, escalating only nuanced issues to an LLM. This approach balances cost and flexibility.

Ready for an SLM that actually works for your business? Let ITRex design your precision AI model – get in touch today.

Originally published at https://itrexgroup.com on May 14, 2025.

The post Small language models (SLMs): a Smarter Way to Get Started with Generative AI appeared first on Datafloq.