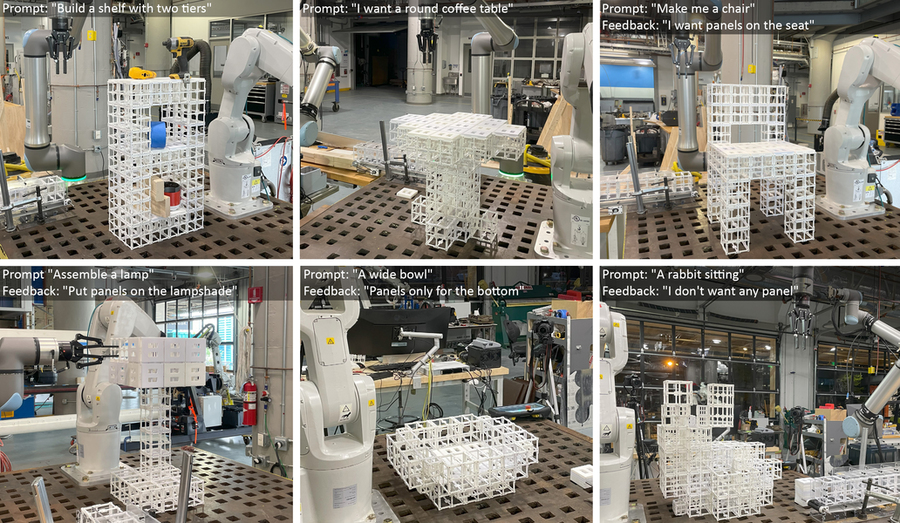

Given the immediate “Make me a chair” and suggestions “I need panels on the seat,” the robotic assembles a chair and locations panel elements based on the person immediate. Picture credit score: Courtesy of the researchers.

By Adam Zewe

Pc-aided design (CAD) programs are tried-and-true instruments used to design lots of the bodily objects we use every day. However CAD software program requires in depth experience to grasp, and plenty of instruments incorporate such a excessive stage of element they don’t lend themselves to brainstorming or fast prototyping.

In an effort to make design quicker and extra accessible for non-experts, researchers from MIT and elsewhere developed an AI-driven robotic meeting system that permits individuals to construct bodily objects by merely describing them in phrases.

Their system makes use of a generative AI mannequin to construct a 3D illustration of an object’s geometry based mostly on the person’s immediate. Then, a second generative AI mannequin causes in regards to the desired object and figures out the place completely different elements ought to go, based on the item’s operate and geometry.

The system can mechanically construct the item from a set of prefabricated components utilizing robotic meeting. It could possibly additionally iterate on the design based mostly on suggestions from the person.

The researchers used this end-to-end system to manufacture furnishings, together with chairs and cabinets, from two varieties of premade elements. The elements might be disassembled and reassembled at will, decreasing the quantity of waste generated via the fabrication course of.

They evaluated these designs via a person research and located that greater than 90 p.c of contributors most popular the objects made by their AI-driven system, as in comparison with completely different approaches.

Whereas this work is an preliminary demonstration, the framework might be particularly helpful for fast prototyping advanced objects like aerospace elements and architectural objects. In the long term, it might be utilized in houses to manufacture furnishings or different objects regionally, with out the necessity to have cumbersome merchandise shipped from a central facility.

“Eventually, we would like to have the ability to talk and discuss to a robotic and AI system the identical means we discuss to one another to make issues collectively. Our system is a primary step towards enabling that future,” says lead creator Alex Kyaw, a graduate pupil within the MIT departments of Electrical Engineering and Pc Science (EECS) and Structure.

Kyaw is joined on the paper by Richa Gupta, an MIT structure graduate pupil; Faez Ahmed, affiliate professor of mechanical engineering; Lawrence Sass, professor and chair of the Computation Group within the Division of Structure; senior creator Randall Davis, an EECS professor and member of the Pc Science and Synthetic Intelligence Laboratory (CSAIL); in addition to others at Google Deepmind and Autodesk Analysis. The paper was lately introduced on the Convention on Neural Data Processing Programs.

Producing a multicomponent design

Whereas generative AI fashions are good at producing 3D representations, generally known as meshes, from textual content prompts, most don’t produce uniform representations of an object’s geometry which have the component-level particulars wanted for robotic meeting.

Separating these meshes into elements is difficult for a mannequin as a result of assigning elements is determined by the geometry and performance of the item and its components.

The researchers tackled these challenges utilizing a vision-language mannequin (VLM), a strong generative AI mannequin that has been pre-trained to grasp photographs and textual content. They activity the VLM with determining how two varieties of prefabricated components, structural elements and panel elements, ought to match collectively to kind an object.

“There are a lot of methods we will put panels on a bodily object, however the robotic must see the geometry and motive over that geometry to decide about it. By serving as each the eyes and mind of the robotic, the VLM allows the robotic to do that,” Kyaw says.

A person prompts the system with textual content, maybe by typing “make me a chair,” and provides it an AI-generated picture of a chair to begin.

Then, the VLM causes in regards to the chair and determines the place panel elements go on high of structural elements, based mostly on the performance of many instance objects it has seen earlier than. As an illustration, the mannequin can decide that the seat and backrest ought to have panels to have surfaces for somebody sitting and leaning on the chair.

It outputs this info as textual content, equivalent to “seat” or “backrest.” Every floor of the chair is then labeled with numbers, and the knowledge is fed again to the VLM.

Then the VLM chooses the labels that correspond to the geometric components of the chair that ought to obtain panels on the 3D mesh to finish the design.

These six photographs present the Textual content to robotic meeting of multi-component objects from completely different person prompts. Credit score: Courtesy of the researchers.

These six photographs present the Textual content to robotic meeting of multi-component objects from completely different person prompts. Credit score: Courtesy of the researchers.

Human-AI co-design

The person stays within the loop all through this course of and may refine the design by giving the mannequin a brand new immediate, equivalent to “solely use panels on the backrest, not the seat.”

“The design area may be very massive, so we slim it down via person suggestions. We consider that is the easiest way to do it as a result of individuals have completely different preferences, and constructing an idealized mannequin for everybody could be unimaginable,” Kyaw says.

“The human‑in‑the‑loop course of permits the customers to steer the AI‑generated designs and have a way of possession within the remaining outcome,” provides Gupta.

As soon as the 3D mesh is finalized, a robotic meeting system builds the item utilizing prefabricated components. These reusable components might be disassembled and reassembled into completely different configurations.

The researchers in contrast the outcomes of their technique with an algorithm that locations panels on all horizontal surfaces which might be going through up, and an algorithm that locations panels randomly. In a person research, greater than 90 p.c of people most popular the designs made by their system.

In addition they requested the VLM to clarify why it selected to place panels in these areas.

“We realized that the imaginative and prescient language mannequin is ready to perceive some extent of the practical points of a chair, like leaning and sitting, to grasp why it’s putting panels on the seat and backrest. It isn’t simply randomly spitting out these assignments,” Kyaw says.

Sooner or later, the researchers wish to improve their system to deal with extra advanced and nuanced person prompts, equivalent to a desk made out of glass and steel. As well as, they wish to incorporate extra prefabricated elements, equivalent to gears, hinges, or different transferring components, so objects might have extra performance.

“Our hope is to drastically decrease the barrier of entry to design instruments. We’ve got proven that we will use generative AI and robotics to show concepts into bodily objects in a quick, accessible, and sustainable method,” says Davis.

MIT Information