It’s typically stated that supercomputers of some many years in the past pack much less energy than right now’s sensible watches. Now we now have an organization, Tiiny AI Inc., claiming to have constructed the world’s smallest private AI supercomputer that may run a 120-billion-parameter giant language mannequin on-device — with out cloud connectivity, servers or GPUs.

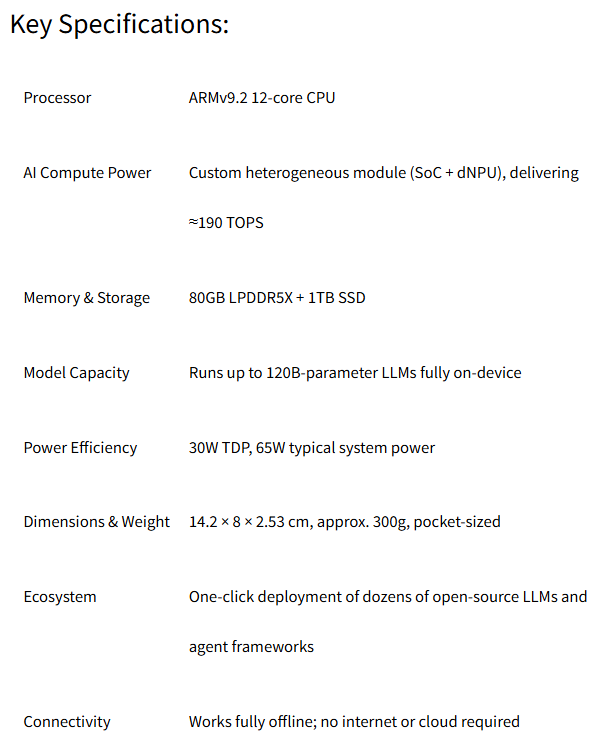

The corporate stated the Arm-based product is powered by two know-how advances that make large-parameter LLMs viable on a compact machine.

- TurboSparse, a neuron-level sparse activation method, improves inference effectivity whereas sustaining full mannequin intelligence.

- PowerInfer, an open-source heterogeneous inference engine with greater than 8,000 GitHub stars, accelerates heavy LLM workloads dynamically distributing computation throughout CPU and NPU, enabling sever-grade efficiency at a fraction of conventional energy consumption.

Designed for energy-efficient private intelligence, Tiiny AI Pocket Lab runs inside a 65W energy envelope and permits large-model efficiency at a fraction of the vitality and carbon footprint of conventional GPU-based programs, Tiiny stated.

The corporate stated the bottleneck in right now’s AI ecosystem shouldn’t be computing energy — it’s dependence on the cloud.

“Cloud AI has introduced exceptional progress, however it additionally created dependency, vulnerability, and sustainability challenges,” stated Samar Bhoj, GTM Director of Tiiny AI. “With Tiiny AI Pocket Lab, we imagine intelligence shouldn’t belong to knowledge facilities, however to folks. This is step one towards making superior AI actually accessible, non-public, and private, by bringing the ability of enormous fashions from the cloud to each particular person machine.”

“The machine represents a serious shift within the trajectory of the AI business,” the corporate stated. “As cloud-based AI more and more struggles with sustainability considerations, rising vitality prices, international outages, the prohibitive prices of long-context processing, and rising privateness dangers, Tiiny AI introduces an alternate mannequin centered on private, moveable, and absolutely non-public intelligence.”

The machine has been verified by Guinness World Information below the class The Smallest MiniPC (100B LLM Regionally), in accordance with the corporate.

The corporate calls itself a “U.S.-based deep tech AI startup,” whereas its announcement has a Hong Kong dateline. The corporate was fashioned in 2024 and brings collectively engineers from MIT, Stanford, HKUST, SJTU, Intel, and Meta, AI inference and {hardware}–software program co-design. Their analysis has been revealed in educational conferences together with SOSP, OSDI, ASPLOS, and EuroSys. In 2025, Tiiny AI secured a multi-million greenback seed spherical from main international buyers in accordance with the corporate.

Tiiny AI Pocket Lab is designed to assist main private AI use instances, serving builders, researchers, creators, professionals, and college students. It permits multi-step reasoning, deep context understanding, agent workflows, content material technology, and safe processing of delicate info — even with out web entry. The machine additionally supplies true long-term private reminiscence by storing consumer knowledge, preferences, and paperwork regionally with bank-level encryption, providing a degree of privateness and persistence that cloud-based AI programs can not present.

Tiiny AI Pocket Lab operates within the ‘golden zone’ of private AI (10B–100B parameters), which satisfies greater than 80 p.c of real-world wants, in accordance with the corporate. It helps fashions scaling as much as 120B LLM, delivering intelligence ranges corresponding to GPT-4o. This permits PhD-level reasoning, multi-step evaluation, and deep contextual understanding — however with the safety of absolutely offline, on-device processing.

The corporate stated the machine helps one-click set up of in style open-source fashions together with OpenAI GPT-OSS, Llama, Qwen, DeepSeek, Mistral, and Phi, and permits deployment of open-source AI agents equivalent to OpenManus, ComfyUI, Flowise, Presenton, Libra, Bella, and SillyTavern. Customers obtain steady updates, together with official OTA {hardware} upgrades. The above options shall be launched at CES in January 2026.