In the world of machine learning, we obsess over model architectures, training pipelines, and hyperparameter tuning, yet often overlook a fundamental aspect: how our features live and breathe throughout their lifecycle. From in-memory calculations that vanish after each prediction to the challenge of reproducing exact feature values months later, the way we handle features can make or break our ML systems’ reliability and scalability.

Who Should Read This

- ML engineers evaluating their feature management approach

- Data scientists experiencing training-serving skew issues

- Technical leads planning to scale their ML operations

- Teams considering a feature store implementation

Starting Point: The invisible approach

Many ML teams, especially those in their early stages or without dedicated ML engineers, start with what I call “the invisible approach” to feature engineering. It’s deceptively simple: fetch raw data, transform it in memory, and create features on the fly. The resulting dataset, while functional, is essentially a black box of short-lived calculations: features that exist only for a moment before vanishing after each prediction or training run.

While this approach may seem to get the job done, it’s built on shaky ground. As teams scale their ML operations, models that performed brilliantly in testing suddenly behave unpredictably in production. Features that worked perfectly during training mysteriously produce different values in live inference. When stakeholders ask why a specific prediction was made last month, teams find themselves unable to reconstruct the exact feature values that led to that decision.

Core Challenges in Feature Engineering

These pain points aren’t unique to any single team; they represent fundamental challenges that every growing ML team eventually faces.

- Observability

Without materialized features, debugging becomes a detective mission. Imagine trying to understand why a model made a specific prediction months ago, only to find that the features behind that decision have long since vanished. Feature observability also enables continuous monitoring, allowing teams to detect deterioration or concerning trends in their feature distributions over time.

- Point-in-time correctness

When the features used in training don’t match those generated during inference, the result is the notorious training-serving skew. This isn’t just about data accuracy; it’s about ensuring your model encounters the same feature computations in production as it did during training.

- Reusability

Repeatedly computing the same features across different models becomes increasingly wasteful. When feature calculations involve heavy computation, this inefficiency isn’t just an inconvenience; it’s a significant drain on resources.
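Point-in-time correctness is the easiest of these challenges to show in code. The sketch below is a minimal illustration rather than a production pattern: the feature name, dates, and values are all made up, and it assumes a feature's history is kept as sorted `(timestamp, value)` pairs. The lookup returns the value that was known at a given moment, which is what a training example should be paired with.

```python
from bisect import bisect_right

# Hypothetical feature history for one customer: (timestamp, value) pairs,
# sorted by timestamp. ISO dates sort correctly as plain strings.
purchase_count_history = [
    ("2024-01-01", 3),
    ("2024-02-01", 5),
    ("2024-03-01", 9),
]


def feature_as_of(history, as_of):
    """Return the latest value recorded at or before `as_of`, or None."""
    timestamps = [ts for ts, _ in history]
    idx = bisect_right(timestamps, as_of)
    if idx == 0:
        return None  # no value existed yet at that time
    return history[idx - 1][1]


# A label observed on 2024-02-15 must be paired with the value known on
# that date (5), not with the latest value (9).
value_at_label_time = feature_as_of(purchase_count_history, "2024-02-15")
latest_value = purchase_count_history[-1][1]
```

Training on `latest_value` instead of `value_at_label_time` leaks information from the future into the training set, which is one common source of training-serving skew.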

Evolution of Solutions

Approach 1: On-Demand Feature Generation

The simplest solution starts where many ML teams begin: creating features on demand for immediate use in prediction. Raw data flows through transformations to generate features, which are used for inference, and only then, after predictions are already made, are these features typically saved to Parquet files. This method is straightforward, and teams often choose Parquet files because they’re simple to create from in-memory data, but it comes with limitations. The approach partially solves observability since features are stored, but analyzing these features later becomes challenging: querying data across multiple Parquet files requires specific tools and careful organization of your saved files.

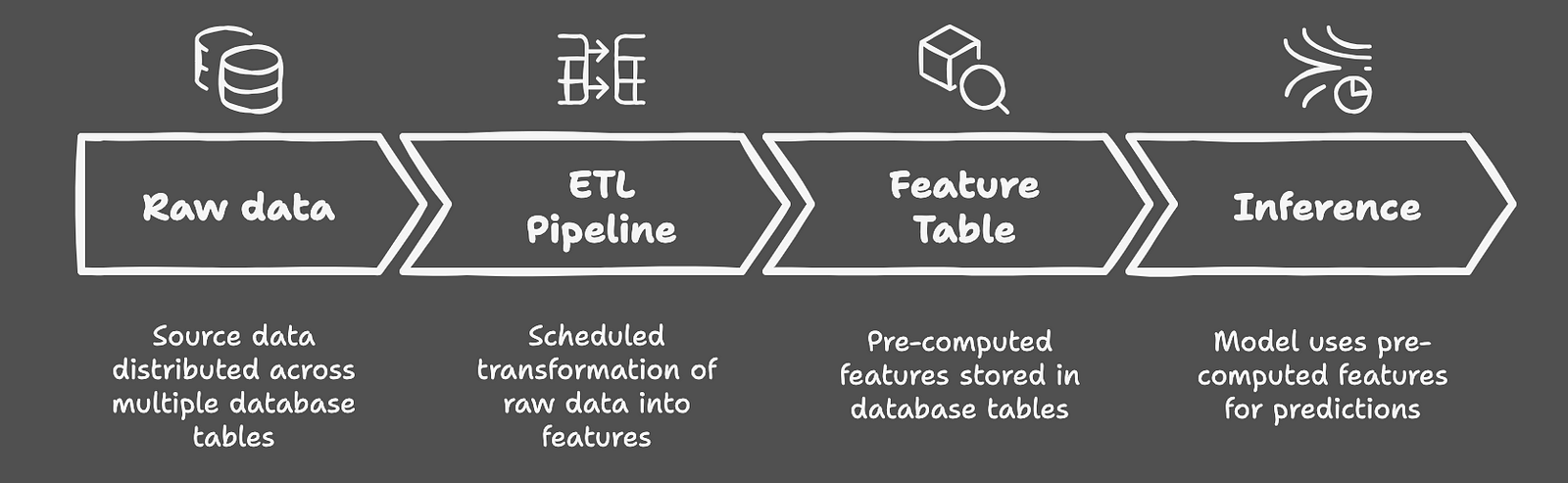
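As a rough sketch of the on-demand flow, the example below computes features in memory, predicts, and only afterwards writes the features to disk. Everything in it is hypothetical: the feature names, the threshold "model", and the file layout. To stay dependency-free it writes JSON Lines rather than Parquet; with pandas and a Parquet engine installed, `DataFrame.to_parquet` would play the same role.

```python
import json
import tempfile
from pathlib import Path


def build_features(raw):
    """Transform one raw record into features, entirely in memory."""
    return {
        "order_total": sum(raw["item_prices"]),
        "item_count": len(raw["item_prices"]),
    }


def predict(features):
    """Stand-in for a real model: flags large orders."""
    return 1 if features["order_total"] > 100 else 0


def predict_and_log(raw, log_dir):
    """Compute features, predict, and only then persist the features."""
    features = build_features(raw)
    prediction = predict(features)
    # One file per day: later analysis has to locate and combine these files.
    log_file = Path(log_dir) / f"features_{raw['date']}.jsonl"
    record = {"id": raw["id"], **features, "prediction": prediction}
    with log_file.open("a") as f:
        f.write(json.dumps(record) + "\n")
    return prediction


log_dir = tempfile.mkdtemp()
result = predict_and_log(
    {"id": "order-1", "date": "2024-02-15", "item_prices": [60.0, 55.5]}, log_dir
)
log_path = Path(log_dir) / "features_2024-02-15.jsonl"
logged = [json.loads(line) for line in log_path.read_text().splitlines()]
```

Note that the logged features are a by-product of inference. Nothing guarantees that a training job will compute them the same way, and answering a question that spans several days means reading and combining several files.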

Approach 2: Feature Table Materialization

As teams evolve, many transition to what’s commonly discussed online as an alternative to full-fledged feature stores: feature table materialization. This approach leverages existing data warehouse infrastructure to transform and store features before they’re needed. Think of it as a central repository where features are consistently calculated through established ETL pipelines, then used for both training and inference. This solution elegantly addresses point-in-time correctness and observability: your features are always available for inspection and consistently generated. However, it shows its limitations when dealing with feature evolution. As your model ecosystem grows, adding new features, modifying existing ones, or managing different versions becomes increasingly complex, especially due to constraints imposed by database schema evolution.
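To make the idea concrete, here is a small sketch that uses an in-memory SQLite database as a stand-in for a data warehouse. The table names, columns, and snapshot dates are assumptions made for illustration. An ETL step writes one feature row per customer per snapshot date, and training and inference both read through the same function.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for a data warehouse

# Raw events, plus a feature table keyed by entity and snapshot date.
conn.executescript("""
CREATE TABLE orders (customer_id TEXT, order_date TEXT, amount REAL);
INSERT INTO orders VALUES
    ('c1', '2024-01-10', 40.0),
    ('c1', '2024-02-05', 60.0),
    ('c2', '2024-02-07', 25.0);
CREATE TABLE customer_features (
    customer_id TEXT,
    snapshot_date TEXT,
    total_spend REAL,
    PRIMARY KEY (customer_id, snapshot_date)
);
""")


def materialize(snapshot_date):
    """ETL step: compute features from data available up to snapshot_date."""
    conn.execute(
        """
        INSERT OR REPLACE INTO customer_features
            (customer_id, snapshot_date, total_spend)
        SELECT customer_id, ?, SUM(amount)
        FROM orders
        WHERE order_date <= ?
        GROUP BY customer_id
        """,
        (snapshot_date, snapshot_date),
    )


def get_features(customer_id, snapshot_date):
    """Single read path shared by training and inference."""
    return conn.execute(
        "SELECT total_spend FROM customer_features "
        "WHERE customer_id = ? AND snapshot_date = ?",
        (customer_id, snapshot_date),
    ).fetchone()


materialize("2024-01-31")
materialize("2024-02-29")

january = get_features("c1", "2024-01-31")   # (40.0,)
february = get_features("c1", "2024-02-29")  # (100.0,)

# Adding a feature later means a schema change; existing rows hold NULL
# for the new column until they are backfilled.
conn.execute("ALTER TABLE customer_features ADD COLUMN order_count INTEGER")
```

The final `ALTER TABLE` hints at the evolution problem: every new or changed feature is a schema migration plus a backfill, and the table has no built-in notion of which version of a feature a given model was trained on.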

Approach 3: Feature Store

At the far end of the spectrum lies the feature store, typically part of a comprehensive ML platform. These solutions offer the full package: feature versioning, efficient online/offline serving, and seamless integration with broader ML workflows. They’re the equivalent of a well-oiled machine, solving our core challenges comprehensively. Features are version-controlled, easily observable, and inherently reusable across models. However, this power comes at a significant cost: technological complexity, resource requirements, and the need for dedicated ML engineering expertise.
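Feature versioning is the piece that is hardest to retrofit onto a plain feature table. The toy registry below is not the API of any real feature store; the class and feature names are invented for illustration, and it leaves out storage and online/offline serving entirely. It only shows the core idea of pinning a model to a specific version of a feature definition.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass(frozen=True)
class FeatureDefinition:
    name: str
    version: int
    compute: Callable[[dict], float]


class ToyFeatureRegistry:
    """Hypothetical in-memory registry; real feature stores add storage and serving."""

    def __init__(self):
        self._definitions: Dict[Tuple[str, int], FeatureDefinition] = {}

    def register(self, definition):
        key = (definition.name, definition.version)
        if key in self._definitions:
            raise ValueError(f"{definition.name} v{definition.version} already registered")
        self._definitions[key] = definition

    def compute(self, name, version, raw):
        return self._definitions[(name, version)].compute(raw)


registry = ToyFeatureRegistry()
registry.register(
    FeatureDefinition("avg_order_value", 1, lambda r: r["spend"] / r["orders"])
)
# v2 changes the definition (excludes refunds) without breaking models pinned to v1.
registry.register(
    FeatureDefinition(
        "avg_order_value", 2, lambda r: (r["spend"] - r["refunds"]) / r["orders"]
    )
)

raw = {"spend": 200.0, "orders": 4, "refunds": 20.0}
v1 = registry.compute("avg_order_value", 1, raw)
v2 = registry.compute("avg_order_value", 2, raw)
```

Because published versions are immutable, a model trained against version 1 keeps receiving version 1 values even after version 2 ships, and two models can share a feature by name without coordinating releases.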

Making the Right Choice

Contrary to what trending ML blog posts might suggest, not every team needs a feature store. In my experience, feature table materialization often provides the sweet spot, especially when your organization already has robust ETL infrastructure. The key is understanding your specific needs: if you’re managing multiple models that share and frequently modify features, a feature store might be worth the investment. But for teams with limited model interdependence or those still establishing their ML practices, simpler solutions often provide a better return on investment. Sure, you could stick with on-demand feature generation, if debugging race conditions at 2 AM is your idea of a good time.

The decision ultimately comes down to your team’s maturity, resource availability, and specific use cases. Feature stores are powerful tools, but like any sophisticated solution, they require significant investment in both human capital and infrastructure. Sometimes, the pragmatic path of feature table materialization, despite its limitations, offers the best balance of capability and complexity.

Remember: success in ML feature management isn’t about choosing the most sophisticated solution, but finding the right fit for your team’s needs and capabilities. The key is to honestly assess your needs, understand your limitations, and choose a path that enables your team to build reliable, observable, and maintainable ML systems.