A Tesla Optimus humanoid robot walks through a factory with people. Predictable robot behavior requires priority-based control and a legal framework. Credit: Tesla

Robots are becoming smarter and more autonomous. Tesla Optimus lifts boxes in a factory, Figure 01 pours coffee, and Waymo carries passengers without a driver. These technologies are no longer demonstrations; they are increasingly entering the real world.

But this raises a central question: How can we ensure that a robot will make the right decision in a complex situation? What happens if it receives two conflicting commands from different people at the same time? And how can we be confident that it will not violate basic safety rules, even at the request of its owner?

Why do conventional systems fail? Most modern robots operate on predefined scripts: a set of commands and a set of reactions. In engineering terms, these are behavior trees, finite-state machines, or sometimes machine learning. These approaches work well in controlled conditions, but commands in the real world may contradict one another.

In addition, environments may change faster than the robot can adapt, and there is no clear “priority map” of what matters here and now. As a result, the system may hesitate or choose the wrong scenario. In the case of an autonomous car or a humanoid robot, such hesitation is no longer just an error; it is a safety risk.

From reactivity to priority-based control

Today, most autonomous systems are reactive: they respond to external events and commands as if they were equally important. The robot receives a signal, retrieves a matching scenario from memory, and executes it, without considering how it fits into a larger goal.

As a result, commands and events compete at the same level of priority. Long-term tasks are easily interrupted by immediate stimuli, and in a complex environment, the robot may flail, trying to satisfy every input signal.

Beyond such problems in routine operation, there is always the risk of technical failures. For example, during the first World Humanoid Robot Games in Beijing this month, the H1 robot from Unitree deviated from its optimal path and knocked a human participant to the ground.

A similar case had occurred earlier in China: During maintenance work, a robot suddenly began flailing its arms chaotically, striking engineers until it was disconnected from power.

Both incidents clearly demonstrate that modern autonomous systems often react without analyzing the consequences. In the absence of contextual prioritization, even a trivial technical fault can escalate into a dangerous situation.

Architectures without built-in logic for safety priorities and for managing interactions with subjects (such as humans, robots, and objects) offer no protection against such scenarios.

My team designed an architecture that transforms behavior from a “stimulus-response” mode into deliberate choice. Every event first passes through mission and subject filters, is evaluated in the context of the environment and the consequences, and only then proceeds to execution. This allows robots to act predictably, consistently, and safely, even in dynamic and unpredictable conditions.

Two hierarchies: Priorities in action

We designed a control architecture that directly addresses the problems of predictability and reactivity in robotics. At its core are two interlinked hierarchies.

1. Mission hierarchy, a structured system of goal priorities:

- Strategic missions: fundamental and unchangeable, such as “Don’t harm a human,” “Assist humans,” and “Obey the rules.”

- User missions: tasks set by the owner or operator

- Current missions: secondary tasks that can be interrupted for more important ones

2. Hierarchy of interaction subjects, which prioritizes commands and interactions by their source:

- Highest priority: owner, administrator, operator

- Secondary: authorized users, such as family members, employees, or assigned robots

- External parties: other people, animals, or robots that are considered in situational analysis but cannot control the system
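To make the two hierarchies concrete, here is a minimal Python sketch of how a controller might choose between simultaneous, conflicting commands, the situation raised at the start of this article. The names (`MissionLevel`, `SubjectRank`, `Command`, `arbitrate`) are hypothetical, and ranking first by mission level and then by the issuer’s rank is an assumption made for this sketch; the architecture described here does not specify how the two hierarchies are combined.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional

class MissionLevel(IntEnum):
    """Mission hierarchy: a lower value means a higher priority."""
    STRATEGIC = 0  # e.g. "Don't harm a human"; never overridden
    USER = 1       # tasks set by the owner or operator
    CURRENT = 2    # secondary, interruptible tasks

class SubjectRank(IntEnum):
    """Hierarchy of interaction subjects: a lower value means a higher priority."""
    OWNER = 0       # owner, administrator, operator
    AUTHORIZED = 1  # family members, employees, assigned robots
    EXTERNAL = 2    # considered in situational analysis, but cannot control the system

@dataclass(frozen=True)
class Command:
    action: str
    source: SubjectRank
    mission: MissionLevel  # the mission level this command would serve

def arbitrate(commands: list[Command]) -> Optional[Command]:
    """Pick one of several simultaneous, conflicting commands (illustrative only)."""
    # External parties cannot control the system, so their commands are never selected.
    eligible = [c for c in commands if c.source != SubjectRank.EXTERNAL]
    if not eligible:
        return None
    # Assumed ordering: mission level first, then the issuer's rank.
    return min(eligible, key=lambda c: (c.mission, c.source))

owner = Command("carry box to shelf A", SubjectRank.OWNER, MissionLevel.USER)
employee = Command("carry box to shelf B", SubjectRank.AUTHORIZED, MissionLevel.USER)
visitor = Command("hand me that tool", SubjectRank.EXTERNAL, MissionLevel.CURRENT)
print(arbitrate([employee, visitor, owner]).action)  # carry box to shelf A
```

Because both hierarchies are plain integer enums, a lexicographic tuple comparison is enough to express “mission first, then subject”; swapping the tuple order would encode the opposite policy.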

How predictable control works in practice

Case 1. Humanoid robot: A robot is carrying parts on an assembly line. A child from a visiting tour group asks it to hand over a heavy tool. The request comes from an external party, and the task is potentially unsafe and not part of the robot’s current work.

- Decision: Ignore the command and continue working.

- Result: Both the child and the production process remain safe.

Case 2. Autonomous car: A passenger asks the car to speed up to avoid being late. Sensors detect ice on the road. The request comes from a high-priority subject, but the strategic mission “ensure safety” outweighs convenience.

- Decision: The car does not increase its speed and recalculates the route.

- Result: Safety has absolute priority, even when it is inconvenient for the user.
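Both cases reduce to a short decision rule: check who is asking, then check whether the request would break a strategic mission. The Python sketch below is illustrative only. Its names are hypothetical, and it assumes the safety judgment (ice on the road, a heavy tool near a child) has already been reduced to a single `violates_strategic` flag; in the architecture described here, that judgment would come from the context and criticality filters.

```python
from dataclasses import dataclass
from enum import Enum, auto

class Subject(Enum):
    OWNER = auto()       # highest priority: owner, administrator, operator
    AUTHORIZED = auto()  # secondary: authorized users
    EXTERNAL = auto()    # considered, but cannot control the system

@dataclass(frozen=True)
class Request:
    action: str
    source: Subject
    # Assumption: set upstream by context/criticality checks (e.g. ice on the road).
    violates_strategic: bool

def decide(req: Request) -> str:
    """Apply the subject hierarchy, then the strategic-mission veto (illustrative only)."""
    if req.source is Subject.EXTERNAL:
        # External parties are taken into account but cannot issue commands.
        return "ignore; continue current task"
    if req.violates_strategic:
        # Strategic missions ("Don't harm a human") outrank every subject, owner included.
        return "refuse; keep safe behavior and replan"
    return f"execute: {req.action}"

# Case 1: a child on a tour (external party) asks for a heavy tool.
case1 = Request("hand over heavy tool", Subject.EXTERNAL, violates_strategic=True)
# Case 2: the passenger is treated as a high-priority subject, but the road is icy.
case2 = Request("speed up", Subject.OWNER, violates_strategic=True)
print(decide(case1))
print(decide(case2))
```

The order of the two checks matters little for these cases, but checking the source first means an external party’s request is dropped before any further evaluation is spent on it.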

Three filters for predictable decision-making

Every command passes through three levels of verification:

- Context: environment, robot state, event history

- Criticality: how dangerous the action would be

- Consequences: what will change if the command is executed or refused

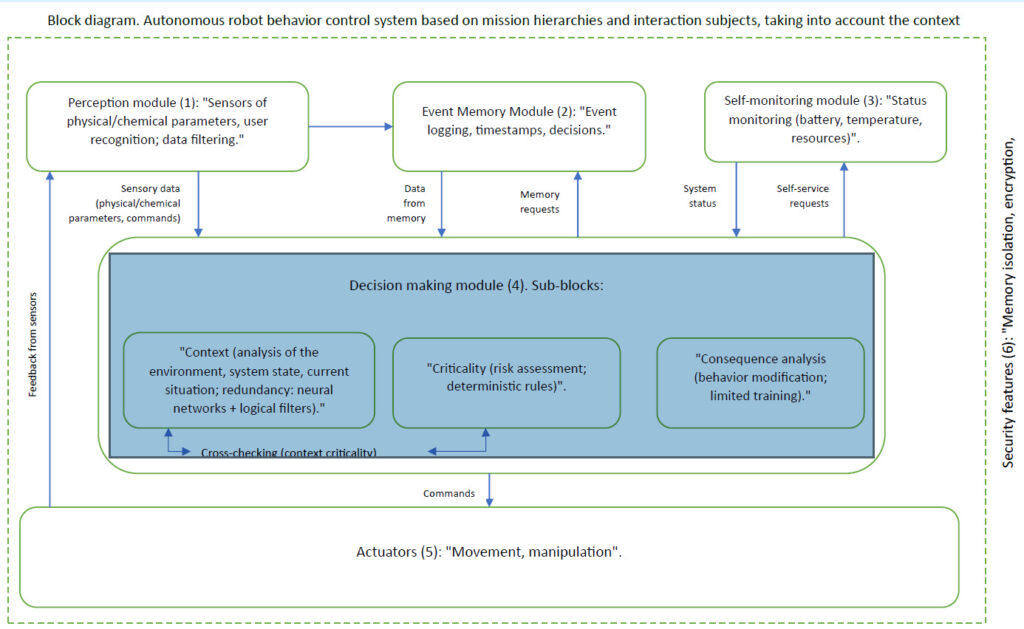

If any filter raises an alarm, the choice is reconsidered. Technically, the structure is carried out in line with the block diagram under:

A management structure to deal with robotic reactivity. (Click on right here to enlarge.) Supply: Zhengis Tileubay
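The three filters and the rule that any alarm triggers reconsideration can be sketched as a small pipeline. This Python example is illustrative only: the situation dictionary, its keys, the 0.5 risk threshold, and the harm estimates are hypothetical stand-ins for whatever perception and prediction modules a real robot would use.

```python
from typing import Callable, Optional

# A filter inspects a command in its situation and returns an alarm message, or None to pass.
Filter = Callable[[str, dict], Optional[str]]

def context_filter(cmd: str, s: dict) -> Optional[str]:
    """Context: environment, robot state, event history (hypothetical keys)."""
    if cmd == "speed_up" and s.get("road") == "ice":
        return "context: icy road"
    if s.get("battery", 1.0) < 0.1:
        return "context: battery critically low"
    return None

def criticality_filter(cmd: str, s: dict) -> Optional[str]:
    """Criticality: how dangerous the action would be (scores and threshold are assumed)."""
    risk = s.get("risk", {}).get(cmd, 0.0)
    return f"criticality: risk {risk:.2f}" if risk > 0.5 else None

def consequences_filter(cmd: str, s: dict) -> Optional[str]:
    """Consequences: compare estimated harm of executing vs. refusing (hypothetical estimates)."""
    if_done = s.get("harm_if_done", {}).get(cmd, 0.0)
    if_refused = s.get("harm_if_refused", {}).get(cmd, 0.0)
    return "consequences: executing is worse than refusing" if if_done > if_refused else None

FILTERS: list[Filter] = [context_filter, criticality_filter, consequences_filter]

def evaluate(cmd: str, situation: dict) -> tuple[str, list[str]]:
    """Run every filter; any alarm sends the command back for reconsideration."""
    alarms = [alarm for alarm in (f(cmd, situation) for f in FILTERS) if alarm is not None]
    return ("reconsider" if alarms else "execute"), alarms

icy = {"road": "ice", "risk": {"speed_up": 0.8}}
print(evaluate("speed_up", icy))
print(evaluate("keep_speed", icy))
```

Running every filter instead of stopping at the first alarm is a deliberate choice in this sketch: the reconsideration step then sees all the reasons a command was flagged, not just the first one.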

Legal aspect: Neutral-autonomous status

We have gone beyond technical architecture to propose a new legal model. For precise understanding, it must be described in formal legal language. The “neutral-autonomous status” of AI and AI-powered autonomous systems is a legally recognized category in which such systems are regarded neither as objects of traditional law, like tools, nor as subjects of law, like natural or legal persons.

This status introduces a new legal category that eliminates uncertainty in AI regulation and avoids extreme approaches to defining the legal nature of AI. Modern legal systems operate with two main categories:

- Subjects of law: natural and legal persons with rights and obligations

- Objects of law: things, tools, property, and intangible assets controlled by subjects

AI and autonomous systems do not fit either category. If they are treated as objects, all responsibility falls solely on developers and owners, exposing them to excessive legal risks. If they are treated as subjects, they face a fundamental problem: they lack legal capacity, intent, and the ability to assume obligations.

Thus, a third category is needed to establish a balanced framework for responsibility and liability: neutral-autonomous status.

Legal mechanisms of neutral-autonomous status

The core principle is that each AI or autonomous system must be assigned clearly defined missions that set its purpose, its scope of autonomy, and the legal framework of responsibility. Missions serve as a legal boundary that limits the actions of AI and determines how responsibility is distributed.

Courts and regulators should evaluate the behavior of autonomous systems based on their assigned missions, ensuring structured accountability. Developers and owners are responsible only within the assigned missions. If the system acts outside them, liability is determined by the specific circumstances of the deviation.

Users who intentionally exploit systems beyond their designated tasks may face increased liability.

In cases of unforeseen behavior, when actions remain within the assigned missions, a mechanism of mitigated responsibility applies. Developers and owners are shielded from full liability if the system operates within its defined parameters and missions. Users benefit from mitigated responsibility if they used the system in good faith and did not contribute to the anomaly.
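The liability rules above amount to a decision procedure. The Python sketch below encodes them as qualitative labels per party, purely to show the structure of the argument; it is not legal logic, the field names are hypothetical, and giving intentional misuse precedence over the other checks is an assumption made for this sketch.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Incident:
    within_missions: bool  # did the system stay inside its assigned missions?
    user_exploited: bool   # did a user intentionally push it beyond its designated tasks?
    user_good_faith: bool  # did the user act in good faith without contributing to the anomaly?

def allocate(incident: Incident) -> dict[str, str]:
    """Qualitative responsibility labels per party (an illustration, not legal advice)."""
    if incident.user_exploited:
        # Intentional use beyond designated tasks: the user may face increased liability,
        # while developers/owners remain responsible only within the assigned missions.
        return {"developer_owner": "limited to assigned missions", "user": "increased"}
    if not incident.within_missions:
        # Deviation outside the missions: decided by the specific circumstances.
        return {"developer_owner": "case-by-case", "user": "case-by-case"}
    # Unforeseen behavior inside the assigned missions: mitigated responsibility.
    return {
        "developer_owner": "mitigated",
        "user": "mitigated" if incident.user_good_faith else "not mitigated",
    }

print(allocate(Incident(within_missions=True, user_exploited=False, user_good_faith=True)))
```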

Hypothetical example

An autonomous vehicle hits a pedestrian who suddenly runs onto the highway outside a crosswalk. The system’s missions are to “ensure safe delivery of passengers under traffic laws” and to “avoid collisions within the system’s technical capabilities” by detecting obstacles at a distance sufficient for safe braking.

The injured party demands $10 million from the self-driving car manufacturer.

Scenario 1: Compliance with missions. The pedestrian appeared 11 m ahead (0.5 seconds at 80 km/h, or 50 mph), well inside the safe braking distance of about 40 m (131.2 ft). The car began braking but could not stop in time. The court rules that the automaker complied with its missions and reduces liability to $500,000, with partial fault assigned to the pedestrian. Savings: $9.5 million.

Scenario 2: Mission calibration error. At night, due to a camera calibration error, the car misclassified the pedestrian as a static object, delaying braking by 0.3 seconds. This time, the carmaker is liable for the misconfiguration, but for $5 million rather than $10 million, because of the status definition.
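The physical figures in Scenarios 1 and 2 can be checked with basic constant-deceleration kinematics. This Python sketch assumes uniform braking whose deceleration is implied by the 40 m braking distance, and that braking starts the instant the pedestrian appears (no sensing or actuation latency), so the impact speed it reports is an optimistic lower bound computed for illustration, not a figure from the example. Applying the 80 km/h speed to Scenario 2 is also an assumption.

```python
import math

KMH_TO_MS = 1 / 3.6   # 1 km/h = 1/3.6 m/s
M_TO_FT = 3.28084     # meters to feet

def scenario_numbers(speed_kmh=80.0, gap_m=11.0, braking_distance_m=40.0, delay_s=0.3):
    """Back-of-the-envelope kinematics for the hypothetical collision (constant deceleration)."""
    v = speed_kmh * KMH_TO_MS                      # initial speed, about 22.2 m/s
    time_to_gap = gap_m / v                        # time to cover the gap at constant speed
    decel = v ** 2 / (2 * braking_distance_m)      # deceleration implied by a 40 m stop
    # Assumption: braking starts the instant the pedestrian appears (zero latency).
    v_impact = math.sqrt(max(v ** 2 - 2 * decel * gap_m, 0.0))
    return {
        "time_to_gap_s": time_to_gap,
        "decel_ms2": decel,
        "impact_speed_kmh": v_impact / KMH_TO_MS,
        "braking_distance_ft": braking_distance_m * M_TO_FT,
        "delay_travel_m": v * delay_s,             # distance covered before a delayed brake engages
    }

for name, value in scenario_numbers().items():
    print(f"{name}: {value:.2f}")
```

Under these assumptions the car reaches the pedestrian in about 0.5 s, needs roughly 6.2 m/s² of deceleration to stop within 40 m, and still arrives at about 68 km/h, which is why it “could not stop in time.” A 0.3 s delay at the same speed adds about 6.7 m of travel before braking begins.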

Scenario 3: Mission violation by the user. The owner directed the car into a prohibited construction zone, ignoring warnings. Full liability of $10 million falls on the owner. The autonomous vehicle company is shielded because the missions were violated.

This example shows how neutral-autonomous status structures liability, protecting developers and users depending on the circumstances.

Neutral-autonomous status offers business, regulatory benefits

Implementing neutral-autonomous status would reduce legal risks. Developers would be shielded from unjustified lawsuits tied to system behavior, and users could rely on predictable responsibility frameworks.

Regulators would gain a structured legal foundation, reducing inconsistency in rulings. Legal disputes involving AI would shift from arbitrary precedent to a unified framework. A new classification system for AI autonomy levels and mission complexity could emerge.

Companies adopting neutral status early could lower their legal risks and manage AI systems more effectively. Developers would gain greater freedom to test and deploy systems within legally recognized parameters. Businesses could position themselves as ethical leaders, enhancing their reputation and competitiveness.

In addition, governments would obtain a balanced regulatory tool, sustaining innovation while protecting society.

Why predictable robot behavior matters

We are on the threshold of mass deployment of humanoid robots and autonomous vehicles. If we fail to establish robust technical and legal foundations today, the risks may outweigh the benefits tomorrow, and public trust in robotics could be undermined.

An architecture built on mission and subject hierarchies, combined with neutral-autonomous status, is the foundation upon which the next stage of predictable robotics can be safely developed.

This architecture has already been described in a patent application. We are ready for pilot collaborations with manufacturers of humanoid robots, autonomous vehicles, and other autonomous systems.

Editor’s note: RoboBusiness 2025, which will be held on Oct. 15 and 16 in Santa Clara, Calif., will feature session tracks on physical AI, enabling technologies, humanoids, field robots, design and development, and business best practices. Registration is now open.

About the author

Zhengis Tileubay is an independent researcher from the Republic of Kazakhstan working on issues related to the interaction between humans, autonomous systems, and artificial intelligence. His work focuses on developing safe architectures for robot behavior control and proposing new legal approaches to the status of autonomous technologies.

In the course of his research, Tileubay developed a behavior control architecture based on a hierarchy of missions and interacting subjects. He has also proposed the concept of “neutral-autonomous status.”

Tileubay has filed a patent application for this architecture, entitled “Autonomous Robot Behavior Control System Based on Hierarchies of Missions and Interaction Subjects, with Context Awareness,” with the Patent Office of the Republic of Kazakhstan.